One of the more amusing words in the SQL Server synchronisation lexicon is “lightweight”. Locks bad. Nolocks good. Latches lightweight. The more spinlocks you eat, the more wait you lose!

If only things were that simple… But hey, I love the poetry of compromise. Check out the SOS_WaitableAddress for one of the many competing definitions of “lightweight”.

The lock-keeper’s cottage

All the synchronisation mechanisms I have discussed so far have one things in common: in order to be globally visible, they involve synchronisation objects embedded within the things they protect. So for instance, if we want to protect the global scheduler list with a spinlock, that spinlock lives within the global scheduler list, and allocating the spinlock’s storage (lightweight as it is) is the responsibility of whoever creates that list. But what if there were millions of things we might occasionally want to lock, and we don’t want to embed a lock within each such item due to the hassle and/or overhead involved? What if we just wanted a publicly visible corkboard upon which we can pin notes describing the items which are currently locked?

Taken to its logical extreme, this thinking gives us the storage engine’s lock manager: a single globally accessible container for locks. Just imagine how complicated life would be if each row and each key in each table had to have storage put aside for the lock someone might one day take out against it. This is the scenario we avoid by creating locks as encoded descriptions of the things being locked as and when needed – the lock manager then manages the “corkboard” and the lockable resources don’t need to concern themselves with lock semantics or storage allocation.

An SOS_WaitableAddress is related to a storage engine lock in this regard: the object is only allocated and constructed at the point when it is known to be needed, i.e. when a piece of code thinks it may want to wait on it, and someone takes care of managing the global collection of these ojects.

The nature of the beast

Consider how something like a SOS_RWLock works in terms of creating and finding it. The link between it and the object it guards could show up in one of two ways:

- Convention – everybody somehow knows where to find the lock for a given resource.

- Association – it is embedded within the protected resource, either completely or in minimal form as a pointer to the lock object. If you have a reference to the resource, you can derive a reference to its lock.

The SOS_WaitableAddress reverses the relationship by owning an abstract reference to the resource it protects, and also making itself globally discoverable through that reference. The reference takes the form of a 64-bit integer, and the logical candidate here is the address of the protected resource as a surrogate for the resource itself. Even though this is strictly speaking a pointer, we won’t use it as such; since the type of the resource is a wildcard, we can’t make assumptions about accessing members or calling methods on it.

Before we get into the nitty-gritty of how this thing works, let’s look at it from suitably high level first:

- We have a “thing” that can be waited on, identifiable by its address. This address is known as a cookie within SQLOS, although you may find it useful to think of it as a key: much like a bigint primary key, it is a readily available unique surrogate for any object.

- Anybody can register an interest in waiting on a cookie, and this doesn’t disturb or even touch the referenced resource itself. The act of constructing a SOS_WaitableAddress instance is this expression of interest.

- It is implied in the contract that the resource will broadcast a signal of the form “cookie XYZ has been signalled”, at which time any waiters can be unleashed from their waits.

- In the case where there are no interested waiters, no allocation of synchronisation objects is done. The act of signalling zero listeners is still valid.

As such, this signaling is a very close match to a non-technical understanding of an “event”, more so than the software construct we call an Event. If a tree falls in the forest, the fall is not dependent on being observed, and it won’t error for want of listeners.

The interface

The class has a constructor which takes the cookie as a parameter, and it exposes three additional methods:

- Wait(). This is a straightforward wrapper for an embedded EventInternal’s Wait() method.

- WaitAllowPrematureWakeup(). Again a wrapper for the EventInternal method.

- Signal(). This acts as you would expect, although it contains a fair bit of magic, to be described later.

- SignalCookie(), which is a static method and therefore doesn’t require a reference to a SOS_WaitableAddress, but which takes a cookie as a parameter. This will find and signal zero or more SOS_WaitableAddresses which are waiting on that cookie.

In terms of usage, it is therefore pretty straightforward and cleanly encapsulated. Take note though that there is no notion of ownership here: you will never own the tree, but you are free to act in response to the fall.

Class layout

A SOS_WaitableAddress instance needs to be constructed by an interested waiter, which then owns the task of allocating and deallocating it. Since the instance only needs to exist for as long as there is a wait, it is a perfect candidate for implementation as a local variable within the method that needs it.

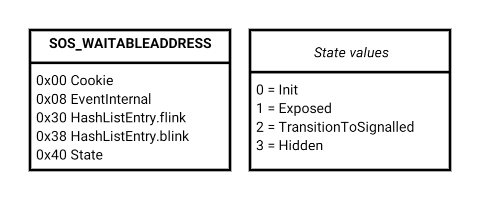

Here then is the class layout. As I have already mentioned (and as you could have expected!), an EventInternal<SuspendQSlock> beats within – I have not broken out its members, because it should by now be an old friend by now. The only thing unusual about this is its special SignalMode of 2, but this doesn’t actually confer any special behaviour.

The State member’s enumeration values give a clue to the object’s lifecycle (as usual these are my best-guess names, but they are clear enough):

- 0 – Init

- 1 – Exposed

- 2 – TransitionToSignalled

- 3 – Hidden

Both Init and TransitionToSignalled are transient, leaving Exposed and Hidden as the main states. Exposed means that the SOS_WaitableAddress is published, globally discoverable through its cookie, and ready to respond to that cookie being signalled. Once signalled (or theoretically earlier if we somehow want to drop out of the game) it changes to Hidden, meaning that the object still exists but won’t participate in any synchronisation, since it isn’t published and discoverable.

The SOS_WaitableAddressManager

I have repeatedly mentioned the concept of these synchronisation objects being “published” and “discoverable”. This is made possible by having a supervisor class, the singleton SOS_WaitableAddressManager. Its purpose is life is to:

- Own the global collection of exposed (published) SOS_WaitableAddresses

- Manage thread-safe access to that collection

- Broadcast the act of signalling to any number of SOS_WaitableAddress objects waiting on the same signaled cookie

Its methods encapsulate much of the tricky functionality that is publicly exposed by SOS_WaitableAddress methods:

- Expose() is called by the SOS_WaitableAddress constructor. It adds that newly created instance to the global collection and upgrades Status from Init to Exposed.

- Hide() is called by the SOS_WaitableAddress destructor, and removes that instance from the global collection. If it is currently in the Exposed state, the transition is immediate, and if it is already Hidden (due to being signaled by another thread) nothing needs doing. However, as an edge case, if it is currently in TransitionToSignalled the method will spin a bit, interspersed with SwitchToThread() pauses in the manner of a spinlock, until the state transition to Hidden is observed.

- Signal() will signal all SOS_WaitableAddresses waiting on the specified cookie. If passed a pointer to an SOS_WaitableAddress instance (as called by SOS_WaitableAddress::Signal()), it will have a shortcut to the first one to signal; if this pointer is omitted (the static SOS_WaitableAddress::SignalCookie()), it must first do a search to see if there are any listeners for this cookie whatsoever.

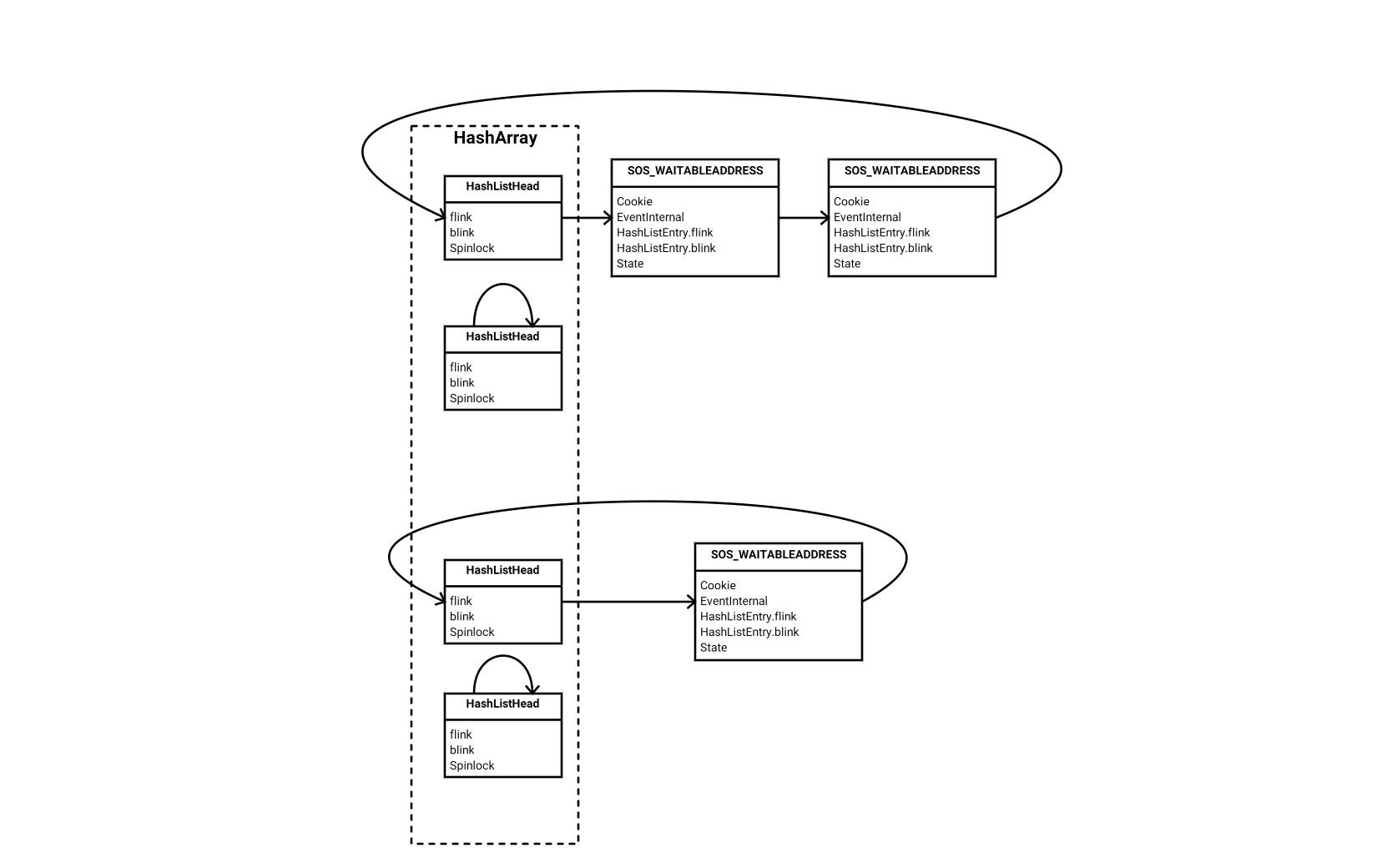

The crown jewel in the manager class’s data members is an array of list head structures representing hash buckets. The number of hash buckets will always be a power of 2, and on my 2014 instance I found 2048 buckets – this may or may not be variable across environments or versions. Each array entry is a simple three-member structure containing a flink, a blink and a spinlock of SOS_WAITABLE_ADDRESS_HASHBUCKET flavour.

As simplified illustration of how this all fits together, let’s pretend we only have four hash buckets. Two of them are empty, one contains one item, and one contains two items. Here is what the array of linked lists would look like:

Meet a hash table

So the global collection of “discoverable” items is in fact a hash table. Since it is the first time we have come across one of these in the series, let’s savour the moment and get to know it better.

One simple way of keeping a collection of key-identifiable objects is to put them into a linked list. For a small number of items, this works very well, but the cost of finding any item increases linearly with the size of the list that needs to be traversed. Another option is to keep them in sorted order within a tree structure; this allows quick searching, but has a high degree of complexity and management overhead.

The hash table sits somewhere in between these, containing a predetermined number of hash “buckets”, with each bucket being a linked list in this case. The item’s key determines which bucket it belongs to, and with the right combination of hashing algorithm, bucket count and luck, you’d end up with a very small number of list entries in each bucket’s linked list. The cost of access consists of a very small fixed cost of hashing and getting to the list head for that hash bucket, plus a linked list traversal cost which is proportional to the list length within that bucket.

This particular implementation has 2048 (= 2 ^ 11) hash buckets, and therefore the hashing function has to reduce a 64-bit cookie to an 11-bit cookie hash. While the items within a given linked list aren’t ordered, the SOS_WaitableAddressManager does apply a rule of keeping items for the same cookie next to each other. This simplifies things when that cookie is signalled, because the Signal() method only needs to search for the first list entry matching the supplied cookie, and then it can restrict the check for further matches to immediately adjacent list entries. If this call came via the instance method SOS_WaitableAddress::Signal(), no searching for the first matching entry is necessary, because we already have it in hand (remember that it’s trivial to translate between a list entry and its containing record and vice versa). However, if the call came via the static method SOS_WaitableAddress:SignalCookie() we have a known cookie but no known list entry, so the list must be traversed to find that first matching entry, or to confirm that there are in fact no items with that cookie.

A secondary advantage of this hash table implementation is spinlock partitioning. If there was a single linked list protected by a single spinlock, we’d be in for a double whammy:

- Everybody would be contending on that single spinlock

- Once someone has acquired it, they could hold onto it for quite a while if there are lot of items to traverse in that single list. The length of time the spinlock is held then compounds the problem of a single point of contention.

However, here we have 2048 hash buckets, each being a list head with its own spinlock protecting that bucket. The chances of two threads trying to access the same bucket at the same time is vastly diminished, and the length of time they might contend during a chance meeting is reduced to the time it takes to traverse a (hopefully) very short list.

In terms of managing this collection, things are again rather upside-down compared to the SOS_RWLock. For the latter, the lock can be long-lived, and it is the list of waiters that gets managed. Each instance of a wait gets its own waiter structure inserted in that lock’s waiter list, and upon completion of the wait the waiter structure is discarded while the lock remains ready to accept further waiters. For an SOS_WaitableAddress though, it is the whole object which gets evicted from this global list (“hidden”) upon completion of a wait.

Picking up on one of my first points, one of the most significant things defining this synchronisation method is to consider what it looks like when there are no interested (potential) waiters. An SOS_RWLock must remain in place forever while the resource it protects is salient, even if nobody wants to wait on it. In contrast, nothing is allocated and constructed for a cookie until somebody decides it’s worth waiting on it. Apart from the performance benefit, this gives some great freedom of extensibility: the participating resources have no need to be aware that they are used in this piece of synchronisation choreography.

Example from the wild

The class does actually play a supporting role in the storage engine lock manager, but that gets a bit complicated. Let’s instead look at the WaitForDone() method in the good old SOS_Task class.

This has a very typical signature for a wait method: a timeout in milliseconds, and a pointer to an SOS_WaitInfo structure to track the wait against a wait type. Internally it constructs a SOS_WaitableAddress as a local variable, with the address of a structure within the task as a cookie. The act of construction exposes the object in the waitable address manager’s hash table, i.e. the SOS_WaitableAddress gets inserted into the linked list for the hash bucket corresponding to its cookie hash. This (i.e. its embedded EventInternal) then gets waited upon before the method can return, and everything is torn down after the wait completes, whether a successful wait or not.

To exercise the method, I put a breakpoint on sqldk!SOS_Task::WaitForDone(), crossed my fingers, started a long-running parallel query and aborted it. This is the point where one half expects to find “Paul and Adam were here” graffiti…

The top of the call stack as it hit the breakpoint:

sqldk!SOS_Task::WaitForDone sqlmin!SubprocessMgr::AbortAndWaitForSubprocs sqlmin!CQueryInstance::AbortSubprocsAndWait sqlmin!CQueryInstance::AbortSubprocess sqlmin!CQueryInstance::ShutdownParallel sqlmin!CQueryScan::DestroyQueryOnException sqlmin!CXStmtQuery:ShutdownOnException sqlmin!CXStmtQuery:FinishOnExceptionImp sqllang!rc4 MSVCR100!_CallSettingFrame

I want to call out one item from higher up the call stack. The abort is done in this case by raising an actual exception, which then gets processed through the C++ run-time library and caught within the CXStmtQuery class. Fast-forward to the point where we stopped, and we see that it is the SubProcessMgr class which owns the job of setting up the call to WaitForDone(). It initialises an SOS_WaitInfo with a wait type of EXECSYNC, which explains why the above call stack partly corresponds to examples here.

The knowledge that this wait ended up getting labelled as EXECSYNC also illustrates again how synchronisation mechanisms are orthogonal to wait type analysis. It is quite possible (I haven’t confirmed one way or the other) that there are some instances of EXECSYNC using synchronisation classes other than the SOS_WaitableAddress. Additionally, when you are trying to troubleshoot a performance problem, it is probably rare that the means of synchronisation is nearly as important as the reason for synchronisation. Finally, these call stacks (both my example and the SQLskills ones) don’t make it clear that a SOS_WaitableAddress is involved. Because Wait() is just a springboard to the equivalent EventInternal call, and uses a tail call (a jmp rather than a call), the stack trace for a wait will only make the EventInternal visible, and not the mechanism built on top of it.

Conclusion

This very interesting class has opened up a few new areas for exploration, although the practical uses (the lock manager and parallel query synchronisation) lie beyond the deeper levels of SQLOS, taking us into the storage engine. I shall try and stay within SQLOS for a bit longer, but those areas beckon, so who knows…

Very informative this series is undoubtedly.

Please dont mind most of the people in Sql world are not from programming area so they find it very difficult to instill these things. I myself has read this article numerous times but still am unable to completely digest the things. article is bit difficult to understand. So my humble request is please also add broad summary and action should be taken from our point of view. We will be highly obliged.

Regards,

Nick